Artificial Intelligence in Medicine. Algorithm Precision, Physician Liability

March 12, 2026 / Irina Bustan

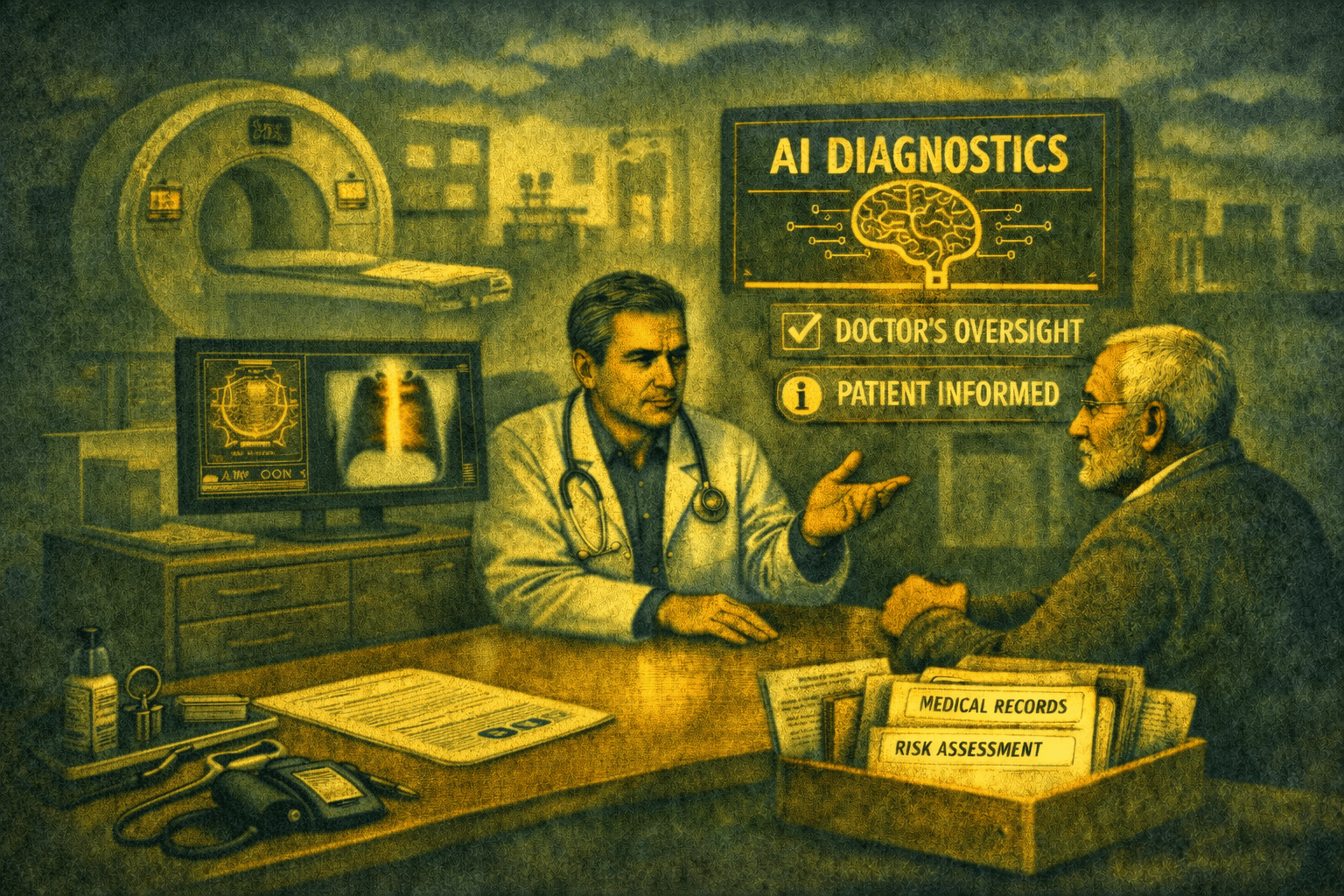

Artificial intelligence is already present in many medical facilities in Romania. It is used, among other things, in radiology for image interpretation, in laboratories for results analysis, and in triage for patient assessment. The benefits are evident: increased precision, reduced waiting and consultation times, a second pair of eyes in the diagnostic process, and much more.

And our clients are already leveraging them. We are not here to slow down innovation, but to ensure they can benefit from everything artificial intelligence has to offer, on solid legal ground for the patient, the physician, and the medical facility alike.

Because AI is not just a technical tool. It is a factor that can influence medical decisions, patient safety, and physician liability. This is precisely why Regulation (EU) 2024/1689 on artificial intelligence (AI Act) classifies most AI tools used in healthcare as high-risk systems.

And from 2 August 2026, compliance is no longer solely the responsibility of the AI system provider. A series of direct obligations for deployers, i.e. the medical facilities using these systems, comes into force under the Regulation. We will address the most important ones.

1. Human Oversight, More Than a Compliance Checkbox

Art. 14 of the AI Act requires the person overseeing an AI system to understand the system's output, interpret it correctly, and, if necessary, even disregard it.

The theory is clear. The practice, less so. For a physician to decide when to question an AI result or when to disregard it, they do not need to know how the algorithm works. That is not their role.

The physician needs to know the limitations of the AI system they work with: in which situations performance drops, which pathologies are detected poorly or not at all, where false negative rates are higher, how it behaves with atypical anatomies or overlapping pathologies.

This information most likely exists in the provider's documentation, but it rarely reaches the practicing physician. Most often, AI implementation comes with operational training: interface, workflow, integration into clinical processes. All of it is necessary, but insufficient.

In reality, physicians learn how to use the system, but far less often learn when to be cautious. And without a real understanding of the system's limitations, human oversight risks remaining merely a compliance checkbox rather than a real control mechanism, with obvious consequences for physician liability.

If we want AI integration in medical devices to be sustainable – both clinically and legally – then information about limitations must be as visible as information about performance.

2. Patient Transparency: an Obligation, Not an Option

The AI Act imposes transparency obligations when high-risk AI systems are used, a category that includes most AI systems used in healthcare.

Separately, GDPR requires transparency in cases of automated decisions. The two regulations do not exclude each other; they apply simultaneously.

Disclosure may seem like just another formality, but it addresses a real legal risk: a patient who later learns that AI was used can challenge the validity of their informed consent. From there to a malpractice accusation, the distance is short.

Moreover, the AI Act provides for fines of up to 15 million euros or 3% of total worldwide annual turnover for breaching the transparency obligation. And the most underestimated risk remains the loss of trust. A patient who feels something was hidden from them does not return, and spreads the word.

So, what does compliant disclosure actually entail in practice? There is no universal form, but there are questions that need to be clarified: Who communicates? At what point? How do we prove disclosure took place? What do we do if the patient refuses AI use? The answers do not come from the provider. The transparency framework is the responsibility of the medical facility.

3. The Documentation that Manages Risk

When liability for the AI tool used no longer belongs solely to the provider but also to the medical facility, it is the facility's duty to demonstrate, through documentation:

- that AI is used in accordance with the medical purpose and the provider's instructions,

- that risks are continuously assessed and monitored,

- that the medical decision belongs to the physician, and

- that internal procedures for AI use and concrete implementation mechanisms exist.

The questions below are a good starting point:

- Do you know exactly which AI systems you use and what the risk level is for each?

- Do you have the documentation needed to demonstrate compliance in a regulatory inspection?

- Does the medical staff know not only how to use AI, but also when not to rely on it?

- Is the patient informed about AI use before learning about it elsewhere?

If the answer to any of these questions is not a firm "yes," the risks are already present. The deadline of 2 August 2026 is not flexible, and solid compliance documentation requires time, both for preparation and for implementation.

Working with AI algorithm auditing specialists, our team ensures compliance with the AI Act and provides medical facilities with the tools needed to manage legal risks arising from AI use in medical practice. We do this not through generic documentation, but through documentation tailored to each medical facility and effectively integrated into the clinical workflow.